Generative AI doesn’t create new data risks. It amplifies the ones that already exist.

Today, many enterprises are quickly rolling out AI tools like Copilots, custom LLMs, RAG-based applications, and AI-powered search. But these systems are running on top of data environments that were never built to be managed at this speed or scale. The result is predictable.

Sensitive data ends up in places and systems where it does not belong. For example, if you give a spreadsheet to an AI tool for review, it may be stored on the provider’s servers, where it could be accessed by or shown to others.

So, is AI the main issue? No, the issue is visibility. Most organizations do not fully know where their sensitive data lives, who can access it, or how it is moving. AI makes this harder because it is designed to find data, use data, and generate outputs from it at machine speed.

This is where Data Security Posture Management (DSPM) for AI data protection comes in. It is built to solve exactly this problem.

88% of companies now use AI in at least one function. As adoption grows at this pace, DSPM becomes essential for organizations that want to manage and control the data risk linked to AI.

To show why a lack of data visibility is dangerous in AI environments, the next section looks at these risks in detail.

The New Data Risk Surface Created by AI

To understand why DSPM for AI matters, you first need to see the new kind of risk AI brings.

Imagine one of your employees pastes a customer contract into a public AI assistant to get a quick summary. That data, which may include PII, financial terms, or sensitive business details, has now left your environment.

Now consider a Copilot-style assistant deployed in Microsoft 365 with wide access to SharePoint. It can easily surface files and data that were always there but hard to find until now.

Here is what can go wrong when this kind of activity occurs in your organization:

- Accidental data exposure through AI prompts: Employees often enter sensitive data like customer info, financial details, or business plans into AI tools without knowing if the data could be stored, logged, or reused.

- LLM fine-tuning on unclassified data: AI teams use internal datasets for training but may not know if the data includes regulated information like Aadhaar numbers, PAN details, health records, or confidential business data.

- Model memorization and inversion attacks: Large language models can sometimes repeat parts of their training data, and attackers can deliberately probe a model to extract it. If that data includes sensitive information, it can lead to data leaks.

- Shadow AI: Employees using personal accounts on ChatGPT, Gemini, or similar tools with company data create a gap in data governance that many organizations are just starting to notice.

As per the Netskope Threat Labs Cloud and Threat Report 2026, companies see about 223 cases every month where employees share sensitive data with AI apps. In the most affected companies, this number can go up to 2,100 incidents per month.

What all these risks have in common is that you cannot see them without proper data discovery and classification. This is exactly where DSPM helps.

What is DSPM?

Data Security Posture Management (DSPM) is a security approach that helps organizations discover, classify, and manage sensitive data across cloud storage, databases, SaaS applications, and data lakes.

It gives clear visibility into where sensitive data exists, what type of data it is, and who has access to it. This helps organizations reduce risk by ensuring that sensitive data is properly governed and only accessible when needed.

In AI environments, this visibility becomes even more important. Since AI systems rely heavily on data, organizations need to know exactly what data is being used and whether it is safe to include in AI workflows.

Why Traditional DLP Falls Short for AI

Data Loss Prevention (DLP) tools were built for a world with known channels like email gateways, USB ports, and web proxies. They work by checking content as it moves through these paths and blocking it if it breaks a policy.

AI changes this model in two big ways.

First, AI interactions are conversational and contextual, not file based. A DLP tool can block an attachment with a PAN number from being sent over email. But it cannot easily catch a sentence in an AI prompt that describes the same customer’s financial details.
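This first limitation can be illustrated with a minimal sketch. It assumes a DLP rule that works purely by pattern matching. The function name `pattern_dlp_flags` and both sample strings are hypothetical, and real DLP products use richer detection than a single regular expression.

```python
import re

# A PAN has the shape: five letters, four digits, one letter (e.g. ABCDE1234F).
PAN_PATTERN = re.compile(r"\b[A-Z]{5}[0-9]{4}[A-Z]\b")


def pattern_dlp_flags(text: str) -> bool:
    """Return True if the text contains something shaped like a PAN."""
    return PAN_PATTERN.search(text) is not None


# An attachment-style payload with a literal identifier is caught.
attachment = "Customer PAN: ABCDE1234F, credit limit 12,00,000"

# A conversational prompt about the same customer carries no matching token.
prompt = (
    "Summarise the risk for our Pune customer who missed two loan "
    "repayments and asked to raise his credit limit to twelve lakh."
)

print(pattern_dlp_flags(attachment))  # True
print(pattern_dlp_flags(prompt))      # False
```

The prompt is just as sensitive as the attachment, but nothing in it looks like an identifier, so a pattern-only rule lets it through.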

Second, the risk with AI is often not about data moving out, but about data going in. Sensitive data may not leave your organization in a way DLP can see. Instead, it gets fed into a model, which might later reveal it in ways you did not expect. Traditional DLP cannot see:

- what data is going into an AI pipeline

- how that data is being used inside the pipeline

DSPM works at a different and earlier stage. Instead of trying to stop data at the point of misuse, it focuses on making sure sensitive data is:

- discovered

- classified

- properly governed

This happens before the data ever reaches an AI system. The idea is simple. You cannot manage what you cannot see, and you cannot protect what you have not classified.

How DSPM Addresses AI Data Risk

This section explains DSPM’s role in safeguarding AI and how its core capabilities help reduce or eliminate the risk of data leaks.

Discovery of AI-adjacent data stores

A strong DSPM solution continuously scans all your data sources, such as cloud storage, databases, SaaS apps, and data lakes. It helps you find where sensitive data actually lives. For AI governance, this means you know which datasets have regulated or sensitive data before they are used in any AI workflow. You can also look at DSPM components to understand how discovery works across both structured and unstructured data.

Classification that sets AI guardrails

Once the data is found, DSPM classifies it based on sensitivity like PII, financial data, health records, or intellectual property. This classification helps you apply the right policies. For example, sensitive data can be kept out of AI training sets, blocked from AI tools, or flagged for review before use.
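As a rough sketch of how classification labels could drive AI guardrails, the snippet below maps labels to actions and picks the strictest one. The label names, the `POLICY` table, and `decide_ai_action` are assumptions made for illustration, not the behaviour of any specific DSPM product.

```python
from enum import Enum


class AiAction(Enum):
    ALLOW = "allow"
    REVIEW = "flag_for_review"
    EXCLUDE_FROM_TRAINING = "exclude_from_training"
    BLOCK = "block_from_ai_tools"


# Hypothetical policy table: classification label -> action for AI workflows.
POLICY = {
    "public": AiAction.ALLOW,
    "intellectual_property": AiAction.REVIEW,
    "financial": AiAction.EXCLUDE_FROM_TRAINING,
    "pii": AiAction.EXCLUDE_FROM_TRAINING,
    "health": AiAction.BLOCK,
}

# Ordered from least to most restrictive, used to resolve multiple labels.
STRICTNESS = [
    AiAction.ALLOW,
    AiAction.REVIEW,
    AiAction.EXCLUDE_FROM_TRAINING,
    AiAction.BLOCK,
]


def decide_ai_action(labels: set[str]) -> AiAction:
    """Pick the most restrictive action among a dataset's labels.

    Unknown labels fall back to REVIEW so that nothing unclassified
    slips into an AI workflow silently.
    """
    actions = [POLICY.get(label, AiAction.REVIEW) for label in labels]
    if not actions:
        return AiAction.REVIEW  # no labels means the data was never classified
    return max(actions, key=STRICTNESS.index)


print(decide_ai_action({"public", "pii"}))  # AiAction.EXCLUDE_FROM_TRAINING
```

Defaulting to review for unlabelled data is a design choice in this sketch. It reflects the idea that unclassified data should not be treated as safe.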

Monitoring access to AI-connected data stores

DSPM keeps track of who or what is accessing sensitive data. If an AI system or its service account starts accessing data it never used before, DSPM spots this unusual activity and can alert you or block it in real time.
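One simple way to picture this kind of monitoring is a baseline of which account has touched which data store. The sketch below flags first-time access only. The class `AccessBaseline`, the account name, and the store names are hypothetical, and production tools use much richer behavioural signals.

```python
from dataclasses import dataclass, field


@dataclass
class AccessBaseline:
    """Remembers which (principal, data store) pairs have been seen before."""

    seen: set[tuple[str, str]] = field(default_factory=set)

    def observe(self, principal: str, store: str) -> bool:
        """Record an access event and return True if the pair is new."""
        pair = (principal, store)
        is_new = pair not in self.seen
        self.seen.add(pair)
        return is_new


baseline = AccessBaseline()

# Learning period: these accesses are treated as normal.
baseline.observe("svc-copilot", "sharepoint/marketing")
baseline.observe("svc-copilot", "sharepoint/sales")

# Later events: the payroll access has never been seen for this account.
events = [
    ("svc-copilot", "sharepoint/sales"),
    ("svc-copilot", "hr-db/payroll"),
]
alerts = [event for event in events if baseline.observe(*event)]
print(alerts)  # [('svc-copilot', 'hr-db/payroll')]
```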

Flagging sensitive content in AI pipelines

For teams building or fine-tuning models, DSPM scans the training datasets and highlights sensitive content before it gets used. This is important for compliance with rules like India’s DPDP Act, where personal data used in AI must follow the same purpose and consent requirements as any other data processing.
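A pre-training scan of this kind might look roughly like the following. The two detectors are deliberately simple and only illustrative, and `scan_records` and the sample records are made up for this sketch.

```python
import re

# Illustrative detectors only; real classifiers combine many signals.
DETECTORS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "aadhaar_like": re.compile(r"\b\d{4}\s?\d{4}\s?\d{4}\b"),  # any 12-digit group
}


def scan_records(records: list[str]) -> dict[int, list[str]]:
    """Map record index -> names of the detectors that matched that record."""
    findings: dict[int, list[str]] = {}
    for index, text in enumerate(records):
        hits = [name for name, pattern in DETECTORS.items() if pattern.search(text)]
        if hits:
            findings[index] = hits
    return findings


training_records = [
    "How do I reset my password?",
    "Please update the email for asha@example.com",
    "My Aadhaar is 1234 5678 9012 and my email is ravi@example.com",
]

findings = scan_records(training_records)
print(findings)  # {1: ['email'], 2: ['email', 'aadhaar_like']}

# Only records with no findings go forward to fine-tuning.
clean_records = [r for i, r in enumerate(training_records) if i not in findings]
```

Flagged records are held back rather than silently dropped, so a reviewer can decide whether the use is covered by purpose and consent.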

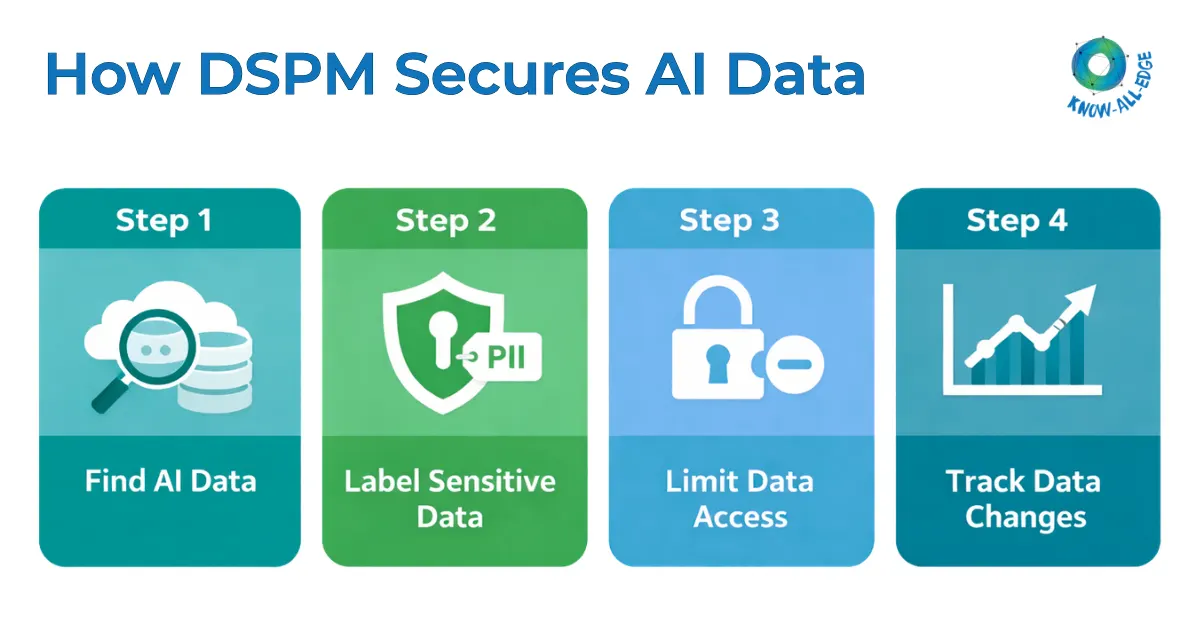

DSPM for AI Data Protection Workflow

Turning DSPM capabilities into a working AI data governance workflow can be done in four steps.

Step 1: Map all data stores connected to AI tools

Before you can control how AI uses data, you need a clear view of all the data sources your AI tools can access. This includes cloud storage, collaboration platforms, internal databases, and third-party data sources connected to your AI setup. DSPM helps automate this mapping and keeps it updated as new connections are added.
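To make the idea of a map concrete, here is a small sketch that inverts an inventory of AI tools and their connections to show which tools can reach each data store. The tool names, store names, and `stores_to_tools` are hypothetical examples.

```python
from collections import defaultdict

# Hypothetical inventory: AI tool -> data stores it is connected to.
ai_connections = {
    "m365-copilot": ["sharepoint/hr", "sharepoint/sales"],
    "support-rag-bot": ["s3://kb-articles", "postgres/crm"],
    "sales-forecast-llm": ["postgres/crm", "sharepoint/sales"],
}


def stores_to_tools(connections: dict[str, list[str]]) -> dict[str, list[str]]:
    """Invert the inventory: data store -> sorted list of AI tools that reach it."""
    reverse = defaultdict(set)
    for tool, stores in connections.items():
        for store in stores:
            reverse[store].add(tool)
    return {store: sorted(tools) for store, tools in sorted(reverse.items())}


exposure = stores_to_tools(ai_connections)
print(exposure["postgres/crm"])  # ['sales-forecast-llm', 'support-rag-bot']
```

Looking at the map from the data store's side makes it easier to ask the governance question: which AI systems can see this data?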

Step 2: Classify content by sensitivity

Apply classification policies to all data that AI can access. Focus first on data stores that are likely to hold regulated data like HR systems, customer databases, financial records, and legal repositories. Classification does not have to be perfect from day one. A risk-based approach, in line with DSPM best practices, focuses on covering the highest-risk areas first.
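A risk-based ordering can be as simple as the sketch below. The category weights and the scoring formula (weight multiplied by the number of AI tools with access) are assumptions chosen for illustration, not an established standard.

```python
# Hypothetical weights: how likely a store type is to hold regulated data.
CATEGORY_WEIGHT = {"hr": 5, "customer": 5, "financial": 4, "legal": 4, "marketing": 1}

# (store name, category, number of AI tools that can reach it)
stores = [
    ("sharepoint/marketing", "marketing", 3),
    ("hr-db/payroll", "hr", 1),
    ("postgres/crm", "customer", 2),
    ("dms/contracts", "legal", 1),
]


def classification_order(inventory):
    """Sort stores so the highest-risk ones are classified first.

    Risk here is simply category weight times AI exposure. Unknown
    categories get a middle weight of 3 rather than being ignored.
    """

    def risk(entry):
        _, category, ai_tools = entry
        return CATEGORY_WEIGHT.get(category, 3) * ai_tools

    return [name for name, _, _ in sorted(inventory, key=risk, reverse=True)]


print(classification_order(stores))
# ['postgres/crm', 'hr-db/payroll', 'dms/contracts', 'sharepoint/marketing']
```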

Step 3: Enforce access controls on AI-accessible data

Use DSPM’s entitlement analysis to review and limit access for AI systems and the accounts they use. Follow the principle of least privilege. AI tools should only access the data they truly need, nothing more. Access should be reviewed regularly, not just when the system is first deployed.
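In its simplest form, a least-privilege review compares what an AI account is allowed to reach with what it has actually used. The sketch below assumes both lists are available as sets. The 90-day window, the store names, and `excess_access` are illustrative choices.

```python
def excess_access(granted: set[str], used: set[str]) -> set[str]:
    """Return data stores an account can reach but has not actually used."""
    return granted - used


granted = {"sharepoint/sales", "sharepoint/hr", "postgres/crm"}
used_last_90_days = {"sharepoint/sales", "postgres/crm"}

to_review = excess_access(granted, used_last_90_days)
print(to_review)  # {'sharepoint/hr'}
```

Anything in the result is a candidate for removal at the next access review.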

Step 4: Monitor for drift as AI use expands

AI environments keep changing. New tools get added, existing ones gain new features, and more data connections are created. DSPM for AI data protection provides continuous monitoring, so your data governance keeps up as your AI environment grows.
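Drift can be pictured as the difference between two snapshots of the AI connection map. The following sketch assumes each snapshot is a mapping from AI tool to the set of data stores it can reach. The snapshot contents and `connection_drift` are hypothetical.

```python
def connection_drift(previous: dict[str, set[str]], current: dict[str, set[str]]):
    """Compare two snapshots of AI tool -> data stores and report the changes."""
    added, removed = {}, {}
    for tool in previous.keys() | current.keys():
        before = previous.get(tool, set())
        after = current.get(tool, set())
        if after - before:
            added[tool] = after - before
        if before - after:
            removed[tool] = before - after
    return added, removed


last_month = {
    "m365-copilot": {"sharepoint/sales"},
    "support-rag-bot": {"s3://kb-articles"},
}
this_month = {
    "m365-copilot": {"sharepoint/sales", "sharepoint/hr"},
    "code-assistant": {"git/internal"},
}

added, removed = connection_drift(last_month, this_month)
# added holds the new HR connection and the newly introduced code assistant;
# removed holds the knowledge-base connection of the retired support bot.
```

New entries in `added` are the ones that need classification and access review before they become part of normal operations.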

DSPM and Shadow AI

Shadow AI is quickly becoming one of the toughest data governance challenges today, and most organizations are still not fully prepared for it.

When an employee uploads a customer proposal to a public AI tool to improve the language, or pastes database data into an AI coding assistant, they are not being careless. They are simply trying to solve a problem faster. But this creates real risks for data governance.

DSPM helps by giving clear visibility into what sensitive data exists and where it is stored. When that data starts moving through integrations, SaaS tools, or unusual access patterns, DSPM can detect it and trigger a response.

This works best when DSPM is used along with CASB and SWG tools. DSPM provides the data context, while CASB and SWG track which external AI tools are being used and can block or alert when sensitive data is shared.
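The division of labour can be sketched as a join between two data sources: the CASB or SWG supplies the event, and DSPM supplies the sensitivity of the file involved. The field names, file paths, labels, and `decide` function below are all assumptions made for illustration. Real integrations depend on the products involved.

```python
# From DSPM (hypothetical export): file path -> classification labels.
dspm_labels = {
    "/sales/proposal-acme.docx": {"pii", "financial"},
    "/marketing/brochure.pdf": {"public"},
}

# From CASB/SWG (hypothetical log): who uploaded what to which destination.
upload_events = [
    {"user": "asha", "file": "/sales/proposal-acme.docx", "destination": "public-ai-chat"},
    {"user": "ravi", "file": "/marketing/brochure.pdf", "destination": "public-ai-chat"},
    {"user": "meera", "file": "/tmp/notes.txt", "destination": "public-ai-chat"},
]

SENSITIVE = {"pii", "financial", "health", "intellectual_property"}


def decide(event: dict) -> str:
    """Combine the CASB event with DSPM context to choose a response."""
    labels = dspm_labels.get(event["file"])
    if labels is None:
        return "alert"  # unclassified file: no data context, so ask for review
    if labels & SENSITIVE:
        return "block"
    return "allow"


decisions = [(event["user"], decide(event)) for event in upload_events]
print(decisions)  # [('asha', 'block'), ('ravi', 'allow'), ('meera', 'alert')]
```

Without the DSPM labels, all three uploads look identical to the CASB. With them, only the sensitive one is blocked.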

DSPM for AI Compliance

AI regulations are evolving quickly, and this has important implications for Indian enterprises.

India’s DPDP Act applies to all personal data processing, including AI use. Whether data is used to train models, fine-tune LLMs, or generate outputs, the same rules apply, such as lawful purpose, consent, data minimization, and security safeguards.

In simple terms, organizations cannot use AI with personal data unless they clearly know where the data is, what it contains, and whether its use is allowed. DSPM makes this possible by providing a reliable data inventory.

Beyond India, the EU AI Act introduces additional requirements, especially for high-risk AI systems. These include proper documentation of training data and checks for bias. A strong DSPM setup helps meet both DPDP and EU requirements by creating a single, consistent audit trail.

For CISOs and compliance teams, this is a major advantage. The same DSPM program that reduces breach risk and supports compliance can also provide the evidence needed for AI regulations, without requiring a separate effort.

Governing AI through data visibility is far more effective than blanket restrictions, which employees often find ways around. It enables smarter, context-aware control over how data is used with AI.

The Road Ahead: AI-Native DSPM

The relationship between AI and DSPM is not one-sided. While DSPM helps govern AI data, AI is also improving DSPM tools.

Modern DSPM solutions use machine learning to improve classification accuracy, reduce false positives, and detect sensitive data patterns more effectively. They also use behavioral analysis to understand how data is normally accessed, making it easier to spot unusual activity.

Another emerging capability is predictive risk scoring, where systems identify data that could become a risk before it actually does.

Looking ahead, automation will play a bigger role. DSPM tools may soon be able to detect when sensitive data is used in AI systems without proper controls, automatically restrict access, and notify the right teams without human involvement.

For Indian enterprises investing in AI, the takeaway is clear. DSPM is not just a tool for current data security. It is a key foundation for building secure, compliant, and scalable AI systems as both technology and regulations continue to evolve.

Conclusion

AI adoption is growing fast, but it is building on data systems that were not designed for this new reality. The organizations that will gain the most from AI, and face fewer risks, are the ones that have done the basics right. They know what sensitive data they have, where it exists, who can access it, and how it is being used. They also keep track of changes in real time.

That is exactly what DSPM for AI data protection does. In an AI-first world, it is no longer optional.

If you are planning to build AI-ready data governance and wondering how to get started with DSPM for AI, Know All Edge can help. We can assess your current data security posture and guide you on the right DSPM approach for your environment. Speak to our team about your DSPM requirements.

Frequently Asked Questions

Is DSPM only useful for large enterprises using AI?

No, DSPM is useful for any organization using AI and handling sensitive data, regardless of size. Even smaller teams can face data exposure risks.

How is DSPM different from data security tools like encryption?

Encryption protects data, but DSPM focuses on visibility and governance. It helps you understand where sensitive data is before applying protections.

Does DSPM slow down AI development?

No, DSPM enables safer AI adoption by ensuring data is properly managed, without blocking innovation or slowing down development processes.

Can DSPM work with existing security tools?

Yes, DSPM integrates with tools like CASB, DLP, and cloud security platforms to provide a more complete data protection strategy.