What is Zero Trust AI? It is the application of the zero trust security principle, “never trust, always verify,” to the new class of actors that AI has introduced into enterprise environments: autonomous agents, language models, machine-to-machine APIs, and AI-generated outputs that feed into business processes.

The challenge is that traditional zero trust was designed around human users and their devices. An employee authenticates, their device is checked for compliance, and access is granted to a specific application. The model works because humans are relatively predictable: they have defined roles, they access predictable resources, and their behavior can be baselined.

AI agents are none of those things. A single agent might call dozens of APIs, query multiple databases, generate content that flows into other systems, and spawn sub-agents — all within a single user request. The access surface is enormous, the behavior is variable, and the blast radius of a compromised or manipulated agent is potentially catastrophic.

In March 2026, Microsoft announced Zero Trust for AI, extending their existing zero trust reference architecture to cover AI systems specifically.

This guide covers what zero trust AI security means in practice: how to apply proven zero trust principles to AI agents, models, and APIs in your organization.

Why AI Systems Break Traditional Zero Trust Assumptions

Traditional zero trust implementation assumes that access principals are identifiable, that their behavior can be baselined, and that they operate within defined roles. AI systems challenge all three assumptions:

AI agents do not have stable identities

A human employee has a user account. An AI agent may be instantiated thousands of times, may run as different service accounts across environments, or may exist only for the duration of a task. Traditional identity controls designed for persistent human accounts do not map cleanly onto ephemeral AI processes.

AI behavior is not predictable

Human users access the same applications in roughly the same patterns. Anomaly detection works because baselines are stable. AI agents, particularly those using large language models, generate emergent behavior. An agent prompted to “research competitors” might attempt to access resources that no previous access request anticipated. Baselining normal behavior for AI agents is genuinely difficult.

AI systems are vectors for prompt injection attacks

A compromised or maliciously prompted AI agent can exfiltrate data through what appear to be normal API calls, escalate its own access by manipulating tools it controls, or take actions that a human reviewer would have flagged as unusual but that the AI processes as legitimate instructions. This is a novel threat class that traditional access controls were not designed to address.

AI-to-AI communication is largely unauthenticated

Multi-agent architectures — where one AI agent orchestrates others — often use no authentication between agents. If an orchestrator agent is compromised, it can issue arbitrary instructions to sub-agents. The attack surface of a multi-agent system is the product of each agent’s access surface, not the sum.

The Five Zero Trust AI Pillars

Zero trust and AI together work by applying the five core zero trust pillars — identity, devices, networks, applications, and data — to AI-specific actors and workflows. Here is what each pillar means in an AI context:

(1) AI Identity: Cryptographic workload identity

Every AI agent, every model endpoint, and every AI-powered service requires a verifiable, cryptographic identity that does not depend on network location. Frameworks like SPIFFE (Secure Production Identity Framework for Everyone) provide a standards-based approach: agents receive short-lived certificates that attest to their identity, which expire and must be renewed, making stolen credentials time-limited.

AI identity should be:

- Cryptographic: not an API key that can be copy-pasted

- Short-lived: expires quickly, forcing periodic re-attestation

- Scoped: the identity is bound to a specific agent role and permission set

- Auditable: every identity issuance and use is logged
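To make the four properties concrete, here is a minimal sketch of an expiring, scoped, signed identity token with an audit trail. It uses only the Python standard library and an HMAC shared secret as a stand-in; SPIFFE itself issues X.509 or JWT documents backed by asymmetric keys, so treat this as an illustration of the properties rather than of SPIFFE. The names `ISSUER_KEY`, `AUDIT_LOG`, `issue_identity`, and `verify_identity` are hypothetical.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional

# Assumption: a demo-only shared secret. A real issuer would keep keys in a KMS
# and would normally sign with asymmetric keys.
ISSUER_KEY = b"demo-only-secret"
AUDIT_LOG: list = []  # auditable: every issuance and verification is recorded


def _sign(payload: bytes) -> str:
    return hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()


def issue_identity(agent_id: str, scopes: list, ttl_seconds: int,
                   now: Optional[float] = None) -> str:
    """Issue a signed token that binds an agent to scopes and an expiry time."""
    issued_at = time.time() if now is None else now
    claims = {"sub": agent_id, "scopes": sorted(scopes), "exp": issued_at + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims).encode())
    AUDIT_LOG.append(("issue", agent_id, claims["exp"]))
    return payload.decode() + "." + _sign(payload)


def _is_valid(token: str, required_scope: str, now: float) -> bool:
    payload_b64, sep, signature = token.rpartition(".")
    if not sep:
        return False  # not in payload.signature form
    expected = _sign(payload_b64.encode())
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False  # tampered or forged
    claims = json.loads(base64.urlsafe_b64decode(payload_b64))
    # short-lived and scoped: both checks must pass
    return now < claims["exp"] and required_scope in claims["scopes"]


def verify_identity(token: str, required_scope: str,
                    now: Optional[float] = None) -> bool:
    """Verify signature, expiry, and scope, logging the outcome either way."""
    current = time.time() if now is None else now
    ok = _is_valid(token, required_scope, current)
    AUDIT_LOG.append(("verify", required_scope, ok))
    return ok
```

A token issued with `ttl_seconds=300` stops verifying five minutes later, so a stolen copy is only useful within that window and only for the scopes it carries.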

(2) Device and environment trust for AI workloads

Where is the AI agent running? A model running in an approved, hardened cloud environment is in a different trust tier than a model running on an employee’s personal device or in an unmanaged third-party environment. Zero trust AI security extends device compliance checks to include the execution environment of AI workloads — the container image, the infrastructure configuration, and the cloud account controls.

(3) Network controls for AI traffic

AI agents should not have unrestricted outbound network access. Apply the same ZTNA principles to AI workloads that you apply to human users: agents should access only the specific APIs and data sources their current task requires, routed through a policy enforcement point that evaluates each request.

This prevents a compromised agent from exfiltrating data to an unauthorized external endpoint and limits the lateral movement available to an attacker who has manipulated an agent.
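As a rough illustration of what a policy enforcement point evaluates, the sketch below applies a default-deny egress check per agent role. The role names, hostnames, and the `EGRESS_POLICY` table are hypothetical; a production deployment would enforce this in a proxy or gateway rather than in the agent's own process.

```python
from urllib.parse import urlsplit

# Assumption: an illustrative policy mapping each agent role to the hosts it may reach.
EGRESS_POLICY = {
    "sales-analyst": {"crm.internal.example", "warehouse.internal.example"},
    "support-triage": {"tickets.internal.example"},
}


def is_egress_allowed(agent_role: str, url: str) -> bool:
    """Default deny: permit only HTTPS requests to hosts listed for the role."""
    parts = urlsplit(url)
    if parts.scheme != "https" or parts.hostname is None:
        return False
    # Unknown roles get an empty allowlist, so every request is refused.
    return parts.hostname in EGRESS_POLICY.get(agent_role, set())
```

With this policy, a manipulated `sales-analyst` agent that tries to post data to an unlisted external host is refused, even though its credentials are valid.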

(4) Application access and least-privilege for AI

AI agents should receive the minimum access required to complete their current task — not broad credentials that allow access to everything their role might conceivably need. This means:

- Just-in-time access provisioning — access is granted when the task begins and revoked when it completes

- Per-task scoping — an agent analyzing sales data does not receive the same credentials as an agent managing customer records, even if both run under the same service account label

- Tool and API allowlisting — agents should only be able to call explicitly approved tools, not arbitrary endpoints
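The three practices above can be sketched together in a few lines. This is a minimal, in-memory illustration, assuming a hypothetical `ACTIVE_GRANTS` store and tool names such as `sales_db.read`; a real system would back the grant with its identity provider or secrets manager.

```python
from contextlib import contextmanager

# Assumption: an in-memory grant store mapping task_id to the tools allowed right now.
ACTIVE_GRANTS: dict = {}


@contextmanager
def task_grant(task_id: str, tools: set):
    """Grant access when the task starts; revoke it when the task ends, even on error."""
    ACTIVE_GRANTS[task_id] = frozenset(tools)
    try:
        yield
    finally:
        ACTIVE_GRANTS.pop(task_id, None)


def call_tool(task_id: str, tool: str) -> str:
    """Refuse any tool that is not on the task's current allowlist."""
    if tool not in ACTIVE_GRANTS.get(task_id, frozenset()):
        raise PermissionError(f"{tool!r} is not granted to task {task_id!r}")
    return f"{tool} executed"
```

Inside `with task_grant("t1", {"sales_db.read"}):` the agent can call `sales_db.read` but not `customer_db.write`, and once the block exits the same call raises `PermissionError` because the grant no longer exists.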

(5) Data protection for AI inputs and outputs

AI systems interact with data in ways that traditional DLP systems were not designed to monitor. Sensitive data flows into model prompts, appears in agent memory, and emerges in outputs that may be displayed to users, stored in databases, or fed into other systems.

Zero trust AI security applies data controls to these flows:

- Classify data before it enters AI systems — do not feed unclassified sensitive data into models without explicit policy approval

- Monitor AI outputs for sensitive data exfiltration — this is a new DLP use case that requires AI-aware tooling

- Log all data accessed by AI agents with sufficient context to reconstruct what the agent did with it
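A very simple form of output monitoring is pattern-based redaction applied before a response leaves the AI system. The sketch below assumes two illustrative regular expressions (email addresses and US Social Security numbers); real AI-aware DLP tooling relies on much richer classification, so this only shows where the control sits.

```python
import re

# Assumption: illustrative patterns only; tune or replace these for your own data classes.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact_output(text: str) -> tuple:
    """Replace matches with a placeholder and report which categories were found."""
    findings = []
    for label, pattern in SENSITIVE_PATTERNS.items():
        text, count = pattern.subn(f"[REDACTED:{label}]", text)
        if count:
            findings.append(label)
    return text, findings
```

The returned list of findings can be written to the same log that records which data the agent accessed, which helps reconstruct what happened after the fact.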

AI and ML as Enablers of Zero Trust Maturity

The relationship between AI and zero trust runs in both directions: zero trust protects AI systems, and AI and ML in turn significantly enhance the maturity of zero trust implementations in 2026.

AI-powered threat detection

Traditional security systems rely on predefined rules and signatures — inadequate against sophisticated and evolving threats. Machine learning models, trained on historical network and identity data, continuously monitor for deviations from established baselines. They can identify anomalous access patterns that indicate account compromise, unusual data access that suggests insider threat activity, and lateral movement patterns that signal an active intrusion.

Critically, AI-powered detection operates at a scale and speed that human analysts cannot match. When an anomaly is detected, automated response — isolating an affected network segment, revoking a compromised credential, triggering step-up authentication — can happen in seconds rather than hours.

Dynamic access control

Static access policies are a weakness in any zero trust implementation. A policy that correctly governs access for typical usage patterns will have exceptions — users who travel, roles that evolve, seasonal access requirements. ML enables truly dynamic access control: access decisions that incorporate real-time risk signals, adapt to context, and adjust authentication requirements proportionally to assessed risk.

As the Zscaler ZTNA framework articulates: if a user who typically accesses systems during UK business hours suddenly attempts access from Hong Kong at 2 a.m. on a Sunday, that warrants suspicion regardless of whether the credential is valid. ML establishes the behavioral baseline that makes this judgment possible at scale.
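The travel example can be expressed as a small risk-adaptive decision function. In this sketch the baseline is supplied by hand, where a real system would learn it from history, and the weights and thresholds are illustrative assumptions rather than tuned values. `AccessContext` and `decide` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessContext:
    country: str
    hour_utc: int               # 0-23
    usual_countries: frozenset  # baseline: where this principal normally signs in
    usual_hours: range          # baseline: hours (UTC) this principal normally works


def decide(ctx: AccessContext) -> str:
    """Return 'allow', 'step_up', or 'deny' from simple additive risk signals."""
    risk = 0
    if ctx.country not in ctx.usual_countries:
        risk += 2  # unfamiliar location weighs more than unusual timing
    if ctx.hour_utc not in ctx.usual_hours:
        risk += 1
    if risk >= 3:
        return "deny"
    return "step_up" if risk >= 1 else "allow"
```

A valid credential used at an odd hour triggers step-up authentication, while the same credential used at an odd hour from an unfamiliar country is refused outright.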

Automated policy management

Managing access policies across a complex enterprise — thousands of users, hundreds of applications, dozens of regulatory requirements — is a manual process that does not scale. ML can analyze historical access data to identify common patterns, automatically generate policies that reflect actual usage, and continuously monitor policy effectiveness, flagging configurations that are no longer appropriate as environments evolve.

Predictive security for AI-specific threats

ML models applied to AI security can identify patterns that precede attacks: unusual sequences of API calls that suggest model probing, access patterns that indicate prompt injection attempts, or data access behaviors that suggest an agent has been manipulated. Predictive controls can trigger alerts or restrict access before damage occurs.

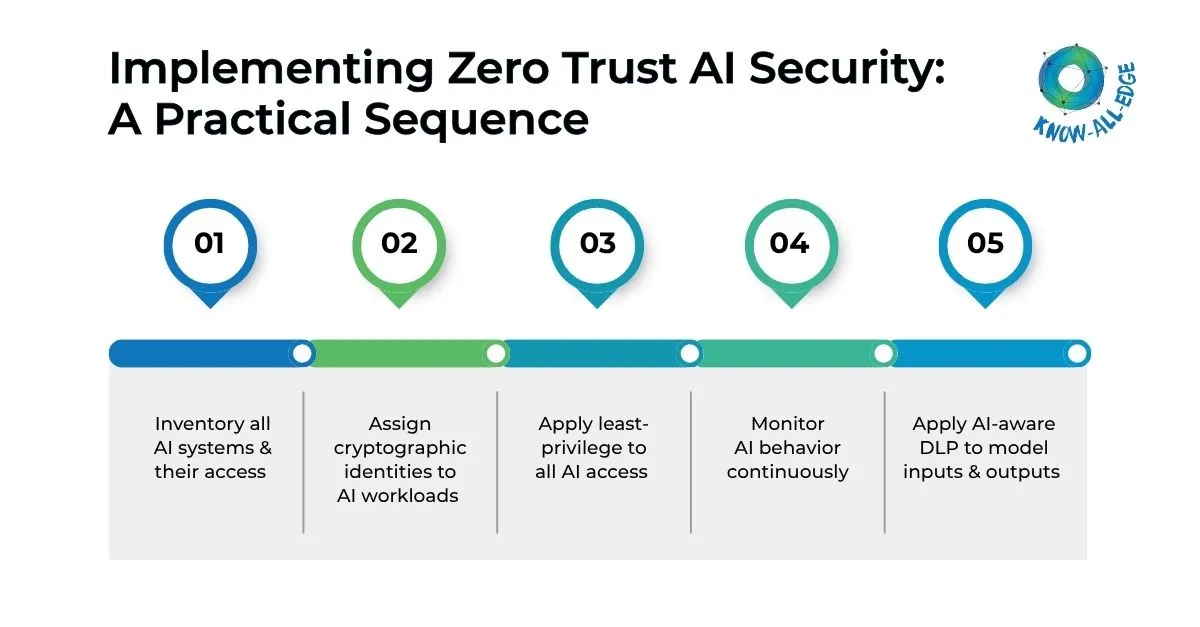

Implementing Zero Trust AI Security: A Practical Sequence

Let’s break down zero trust AI security into practical steps you can actually implement.

Step 1: Inventory all AI systems and their access

You cannot apply zero trust to AI systems you have not discovered. Conduct an audit of every AI agent, model deployment, and AI-powered integration in your environment. For each, document: what data it accesses, what APIs it calls, what credentials it uses, and who is responsible for its governance.

In most organizations, this inventory reveals a significant AI sprawl problem — models and agents deployed by individual teams, often using shared or overly-permissive credentials, without central visibility.
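An inventory does not need special tooling to be useful. As a minimal sketch, the record below captures the fields listed in this step, and a helper flags the two sprawl symptoms mentioned: shared credentials and systems with no accountable owner. The `AISystem` fields and sample values are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystem:
    name: str
    owner: str           # empty string means nobody is accountable yet
    credential_id: str
    data_sources: tuple
    apis: tuple


def inventory_findings(systems: list) -> dict:
    """Flag credentials shared by several systems and systems with no owner."""
    by_credential = defaultdict(list)
    for system in systems:
        by_credential[system.credential_id].append(system.name)
    shared = {cred: names for cred, names in by_credential.items() if len(names) > 1}
    unowned = [system.name for system in systems if not system.owner]
    return {"shared_credentials": shared, "unowned": unowned}
```

Running this over even a spreadsheet export of known deployments gives a first prioritized list for Steps 2 and 3.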

Step 2: Assign cryptographic identities to AI workloads

Replace static API keys and shared service accounts with cryptographic workload identities. This is the foundational step for AI identity in a zero trust architecture. Short-lived credentials that expire and must be renewed are significantly harder for attackers to exploit than long-lived API keys.

Step 3: Apply least-privilege to all AI access

Audit what each AI system currently has access to versus what it actually needs. Revoke excess access. Implement just-in-time provisioning for tasks that require elevated access. This single step typically reduces the AI attack surface significantly, because every permission an agent never uses is one an attacker could still abuse.
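The audit itself is essentially a set difference between what is granted and what is observed in use. The sketch below assumes you can export both as simple mappings from agent name to permission strings; the function name and permission format are hypothetical.

```python
def excess_permissions(granted: dict, observed: dict) -> dict:
    """Return, per agent, the permissions that are granted but never seen in use."""
    report = {}
    for agent, perms in granted.items():
        unused = set(perms) - set(observed.get(agent, ()))
        if unused:
            report[agent] = sorted(unused)
    return report
```

Treat the result as a list of revocation candidates rather than an automatic revocation list: a permission that was unused during the observation window may still be needed for an infrequent task, which is where just-in-time provisioning fits.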

Step 4: Monitor AI behavior continuously

Deploy monitoring that captures what AI agents are doing — which APIs they call, what data they access, what outputs they generate — with sufficient granularity to detect anomalies. Establish behavioral baselines for each agent type and alert on deviations.
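One simple way to start baselining is to record which APIs each agent type has called and alert on anything new. The sketch below assumes events are `(agent_type, api)` pairs and uses a hypothetical `min_count` threshold so that one-off calls do not become part of the baseline. Statistical or ML-based baselines would replace this in a mature deployment.

```python
from collections import Counter


def build_baseline(history: list, min_count: int = 1) -> set:
    """Baseline = the (agent_type, api) pairs seen at least min_count times."""
    counts = Counter(history)
    return {pair for pair, seen in counts.items() if seen >= min_count}


def deviations(baseline: set, events: list) -> list:
    """Return events whose (agent_type, api) pair is absent from the baseline."""
    return [event for event in events if event not in baseline]
```

For example, a summarizer agent that has only ever read documents and run searches would raise a deviation the first time it calls a payroll export API.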

Step 5: Apply AI-aware DLP to model inputs and outputs

Traditional DLP tools inspect known data formats at known control points. AI interactions are neither. Implement DLP tooling designed for AI-specific data flows: prompt inspection, output monitoring, and data lineage tracking that follows sensitive information through AI-mediated processes.

Addressing Ethical and Privacy Considerations

Continuous behavioral monitoring of AI agents raises important questions about the data being monitored and retained. Organizations implementing AI for zero trust must:

- Clearly define what behavioral data is collected and retained, and for how long

- Ensure monitoring practices comply with applicable privacy regulations (GDPR, CCPA, and sector-specific requirements)

- Establish governance processes for AI systems making security decisions, particularly automated blocking or access revocation

- Regularly evaluate whether AI-powered security controls are introducing algorithmic bias or disproportionate impact on specific user groups

The organizations that implement zero trust AI security most effectively treat it as an ongoing governance program, not a technical deployment. The technology enables the controls. Governance, policy, and continuous review sustain them.

The Path Forward

Zero trust AI security is not a future consideration. AI agents are already operating in most enterprise environments: accessing APIs, processing sensitive data, and making decisions that affect business operations. The real question is not whether to secure AI agents with zero trust principles, but how quickly.

The implementation sequence is the same as traditional zero trust: start with identity, apply least-privilege, monitor continuously, and build maturity incrementally. What changes is the threat model — AI-specific attack vectors including prompt injection, model manipulation, and unauthorized data exfiltration through AI-mediated channels require AI-aware controls.

For 2026 and beyond, organizations that implement zero trust for AI agents alongside their broader zero trust implementation will be significantly better positioned, not just to defend against AI-specific attacks, but to deploy AI systems confidently, knowing that the access they grant is limited, monitored, and revocable.

If you’re exploring zero trust implementation for AI, we can help you evaluate your current setup and identify the right controls for your environment. Let’s get started.

FAQs on Zero Trust for AI

What is Zero Trust AI?

Zero Trust AI is the application of zero trust security principles — never trust, always verify — to AI systems including autonomous agents, language models, machine-to-machine APIs, and AI-generated data flows. It means every AI agent requires a verifiable identity, receives only the minimum access needed for its current task, operates under continuous monitoring, and has its access revoked immediately if behavior indicates compromise or manipulation.

Why do AI agents need zero trust security?

AI agents break three core assumptions that traditional access controls rely on: they do not have stable, persistent identities like human users, their behavior is difficult to predict consistently, and they are vulnerable to prompt injection attacks that can trigger unauthorized actions, data exposure, or privilege escalation through seemingly normal API calls. This is why zero trust security for AI agents is becoming critical, as traditional perimeter-based controls were not designed to manage autonomous systems operating across dynamic environments and data sources.

What is a prompt injection attack and how does zero trust help?

A prompt injection attack manipulates an AI agent by embedding malicious instructions in its input (for example, in a document the agent is asked to summarize) causing it to take unintended actions such as exfiltrating data to an unauthorized endpoint or calling APIs outside its permitted scope. Zero trust mitigates prompt injection risk by restricting what APIs and data sources an agent can access, routing all agent traffic through a policy enforcement point, and monitoring agent behavior for deviations from established patterns.

How does AI improve zero trust security implementations?

AI and machine learning enhance zero trust in four ways: they enable anomaly detection at a scale and speed humans cannot match; they power dynamic, risk-adaptive access control that adjusts authentication requirements based on real-time context; they automate policy management by analyzing historical access data and generating policies that reflect actual usage patterns; and they enable predictive security, identifying behavioral patterns that precede attacks before damage occurs.

What is workload identity and why does it matter for AI security?

Workload identity is a cryptographic credential assigned to an AI agent, model endpoint, or automated service — analogous to a user account for human employees but designed for software processes. Unlike static API keys that can be copied and reused indefinitely, workload identities are short-lived (they expire and must be renewed), cryptographically bound to the specific workload, and scoped to a defined permission set. Frameworks like SPIFFE provide a standards-based approach. Workload identity is the foundational step for applying zero trust principles to AI systems.

How do you apply data loss prevention (DLP) to AI systems?

AI-specific DLP requires a different approach than traditional DLP because sensitive data flows through AI interactions in non-standard ways — in model prompts, agent memory, and generated outputs. AI-aware DLP tooling inspects these flows: classifying data before it enters AI systems, monitoring model outputs for sensitive data that should not be in responses, and maintaining data lineage tracking that follows sensitive information through AI-mediated processes. Traditional DLP tools that only inspect known file formats at known control points are insufficient for AI workloads.